TL;DR

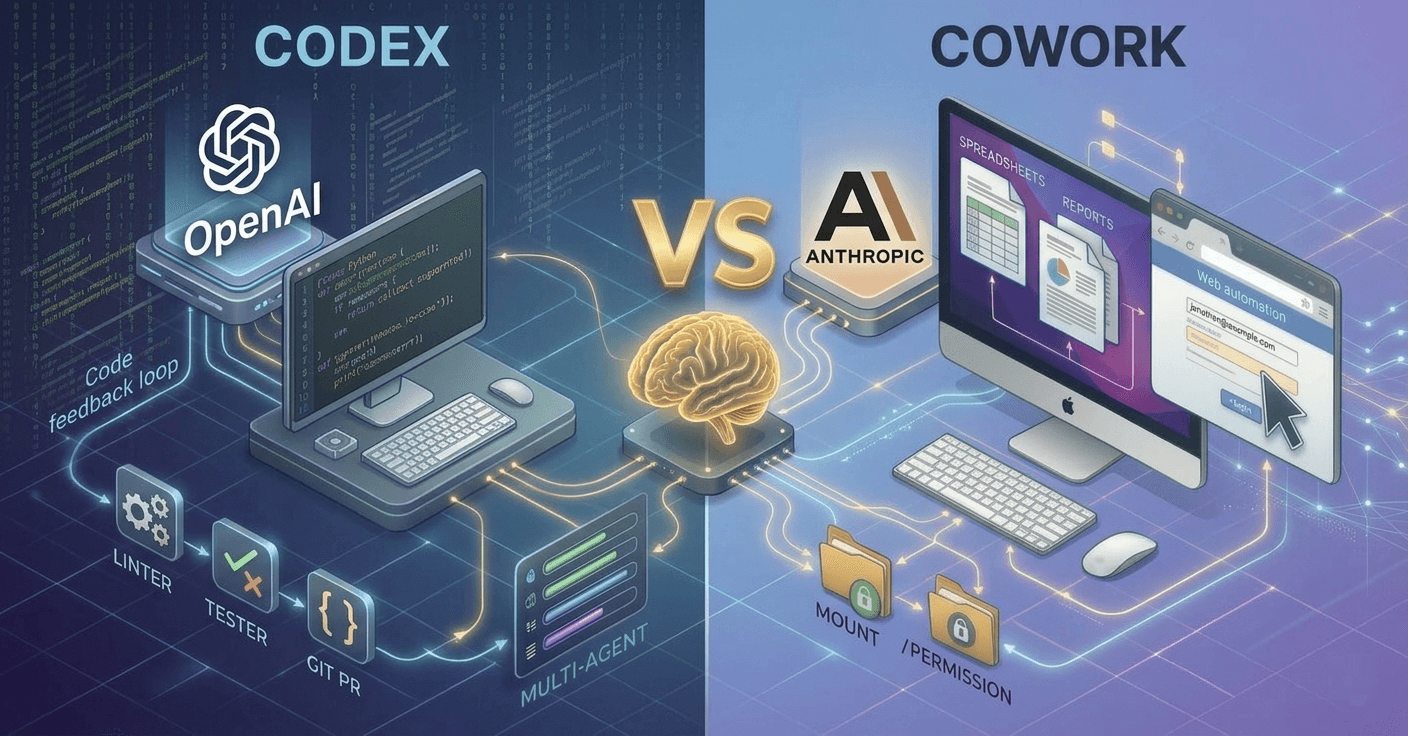

In practice, this isn’t “Codex vs Cowork”; it’s an engineering agent vs a knowledge-work agent

Codex wins when the workflow is: code → run tests → iterate → PR

Cowork wins when the workflow is: files/docs/sheets → pull context from tools → assemble deliverable

Pricing matters: both start at ~$20/month, but costs diverge at scale (Codex adds API-based automation options; Cowork scales through per-seat and usage tiers)

OpenAI Codex and Anthropic Claude Cowork both represent the shift from conversational AI to agentic AI—systems that don’t just answer questions, but plan and execute work. But they’re not trying to win the same job.

Codex is an autonomous, cloud-based coding agent for software developers.

Cowork is a desktop-based agentic AI for non-technical knowledge workers.

At-a-glance comparison

| | OpenAI Codex | Claude Cowork |
|---|---|---|
| Best for | Software developers / engineering teams | Knowledge workers / admins / ops / analysts |
| Primary function | Autonomous coding agent (code, tests, PRs) | Autonomous knowledge-work agent (files, reports, docs, spreadsheets) |
| Launch | May 2025 (web); Feb 2026 (macOS app) | Jan 2026 (research preview in Claude Desktop) |
| Status | Production | Research preview |
| Underlying model | GPT-5.3-Codex | Claude Opus 4 / Sonnet 4 |
| Where it runs | Cloud sandbox (isolated containers) | Local Linux VM (Ubuntu 22 on Apple silicon) |
| Data residency default | Your repo/code is processed in cloud sandboxes | Work runs locally; you mount only folders you allow |
| Internet during tasks | Disabled by default (sandbox isolation) | Routed through a strict allowlist proxy |
| Multi-agent / parallel work | Yes: parallel agents in the desktop app | No: single task at a time |
| Browser automation | No | Yes: via the Claude in Chrome extension |
| Integrations focus | GitHub + dev tools (IDEs/CI/CD/skills) | Productivity suite + connectors (Google/Notion/Slack + MCP ecosystem) |
| Extensibility | Repo-scoped guidance (e.g., AGENTS.md) + Skills library | Markdown-based plugins + open marketplace |
| Platform availability | Web + macOS app + IDE integrations (VS Code/Cursor/Windsurf) | macOS only (Claude Desktop) |
| Starting price | $20/mo (ChatGPT Plus) | $20/mo (Claude Pro) |
| Scaling model | Enterprise plans + API option for automation | Seat + usage tiers (Max tiers; Premium seat pricing) |

What does “agentic AI” actually mean?

Agentic AI is AI that can:

1) interpret a goal, 2) form a plan, 3) take actions, 4) verify progress, and 5) deliver an outcome.

A simple contrast:

Conversational AI: “Here’s an answer.”

Agentic AI: “Here’s the plan. I did the steps. Here are the outputs. Approve next actions.”

Both Codex and Cowork are agentic. They just target different “jobs to be done.”
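To make the five steps concrete, here is a minimal, tool-agnostic sketch of that loop, including the "approve next actions" gate. It is not how Codex or Cowork are implemented; `plan`, `act`, `verify`, and `approve` are hypothetical callables standing in for the model, its tools, its checks, and the human reviewer.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    goal: str
    outputs: list[str] = field(default_factory=list)
    skipped: list[str] = field(default_factory=list)


def run_agent(
    goal: str,
    plan: Callable[[str], list[str]],    # hypothetical: goal -> ordered steps
    act: Callable[[str], str],           # hypothetical: step -> output
    verify: Callable[[str, str], bool],  # hypothetical: (step, output) -> passed?
    approve: Callable[[str], bool],      # hypothetical human gate before each action
) -> AgentRun:
    """Interpret a goal, plan, act, verify, and deliver (illustrative only)."""
    run = AgentRun(goal=goal)
    for step in plan(goal):              # steps 1-2: interpret the goal, form a plan
        if not approve(step):            # a human can veto an action before it runs
            run.skipped.append(step)
            continue
        output = act(step)               # step 3: take an action
        if not verify(step, output):     # step 4: verify progress, stop on failure
            raise RuntimeError(f"verification failed at step: {step!r}")
        run.outputs.append(output)
    return run                           # step 5: deliver the outcome


result = run_agent(
    "summarize Q3 receipts",
    plan=lambda g: ["collect files", "extract totals", "write summary"],
    act=lambda s: f"done: {s}",
    verify=lambda s, out: out.startswith("done"),
    approve=lambda s: s != "write summary",
)
print(result.outputs)  # ['done: collect files', 'done: extract totals']
```

The contrast with conversational AI is the loop itself: a chat model returns one answer, while this structure keeps acting and checking until the plan is exhausted or a check fails.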

What is OpenAI Codex: an engineering agent, not a chat feature

Codex operates like a full software engineering agent. You assign a task in natural language—e.g., “add pagination to the user list endpoint”—and it attempts to complete it end-to-end:

edits code

runs linters/type checkers

runs your test suite

iterates until tests pass

proposes a pull request / change set

Codex’s defining advantage is the feedback loop. Many tools can generate code. Codex is designed to run the loop—execute, verify, iterate—inside a controlled environment.
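The execute, verify, iterate loop can be sketched as a bounded retry around your own checks. This is an illustration under assumptions, not Codex's internals: `propose_change` is a hypothetical stand-in for the agent editing code, and a "check" is any lint, type, or test command whose exit code 0 means pass.

```python
import subprocess
import sys
from typing import Callable

CheckResult = tuple[bool, str]  # (all checks passed?, combined log)


def command_checks(cmds: list[list[str]]) -> Callable[[], CheckResult]:
    """Build a verifier from lint/type/test commands; exit code 0 means pass."""
    def run() -> CheckResult:
        logs, passed = [], True
        for cmd in cmds:  # run every check so the log shows all failures at once
            proc = subprocess.run(cmd, capture_output=True, text=True)
            passed = passed and proc.returncode == 0
            logs.append(proc.stdout + proc.stderr)
        return passed, "\n".join(logs)
    return run


def iterate_until_green(
    run_checks: Callable[[], CheckResult],
    propose_change: Callable[[str], None],  # hypothetical: agent edits code using the failure log
    max_attempts: int = 5,
) -> bool:
    """Execute -> verify -> iterate; True once all checks pass within the budget."""
    for attempt in range(max_attempts):
        passed, log = run_checks()
        if passed:
            return True              # green: the change set is ready to propose as a PR
        if attempt < max_attempts - 1:
            propose_change(log)      # feed failures back into the next edit
    return False


if __name__ == "__main__":
    # Trivial demo: a check that always passes, so no edits are needed.
    ok = iterate_until_green(command_checks([[sys.executable, "-c", "pass"]]),
                             propose_change=lambda log: None)
    print(ok)  # True
```

The attempt budget matters: without it, an agent facing an unfixable failure would loop forever, which is one reason unclear acceptance criteria make this loop a poor fit.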

When Codex is the right tool

well-scoped features

bug fixes with clear failure states

test-writing and coverage

refactors guided by tests and conventions

parallelizing multiple small tasks (when multi-agent is used)

When Codex is the wrong tool

workflows that aren’t primarily code-centric (reports, spreadsheets, multi-tool ops)

ambiguous “figure it out” engineering tasks with unclear acceptance criteria

anything that needs rich cross-department context (marketing → sales → product) more than test-driven iteration

What is Claude Cowork: a knowledge-work agent, not a developer tool

Cowork is built to automate the kind of “glue work” that dominates modern operations:

organize and transform files

create documents and reports

extract data from receipts/PDFs/images

automate multi-step workflows across locally installed productivity apps

It runs in a local Linux VM on your Mac. You explicitly mount folders, and it can use a connector ecosystem (including MCP-style connectors) to interact with SaaS tools. With Claude in Chrome, it can also automate some browser actions (within constraints like CAPTCHAs/logins requiring human intervention).
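Explicit mounts work like a path allowlist: the agent may only touch files that resolve inside a folder you shared. A minimal sketch of that containment check, assuming a hypothetical list of mounted folders; this is an illustration of the idea, not Cowork's actual code.

```python
from pathlib import Path


def is_inside_mounts(candidate: str, mounts: list[str]) -> bool:
    """True if `candidate` resolves inside one of the mounted folders.

    resolve() normalizes '..' segments and follows symlinks, so a path such as
    'Reports/../../.ssh/id_rsa' cannot escape a mount through string tricks.
    """
    target = Path(candidate).expanduser().resolve()
    for mount in mounts:
        root = Path(mount).expanduser().resolve()
        # Compare path components, not string prefixes.
        if target == root or root in target.parents:
            return True
    return False


# Hypothetical mounts: only these two folders were shared with the agent.
MOUNTS = ["~/Documents/Reports", "~/Downloads/Receipts"]
print(is_inside_mounts("~/Documents/Reports/q3.xlsx", MOUNTS))           # True
print(is_inside_mounts("~/Documents/Reports/../../.ssh/id_rsa", MOUNTS))  # False
```

Comparing components is the key design choice: a naive `startswith` check would treat `~/Documents/ReportsOld` as inside `~/Documents/Reports`.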

When Cowork is the right tool

reporting workflows (data → analysis → narrative → deliverable)

“extract → structure → export” tasks (receipts, PDFs, spreadsheets)

working with local files

browser-driven ops tasks (research, form filling, authenticated portals)

When Cowork is the wrong tool

engineering workflows that require test harness iteration, PR creation, and IDE-native integration

high-concurrency automation needs (if you need many parallel agents)

workflows that need deep, expert-level customization

Pricing & packaging (non-feature reality check)

Capabilities matter, but pricing and scaling model determine what you can actually roll out.

Here’s the practical pricing comparison to keep in mind (as of Feb 2026):

Quick pricing snapshot

| | OpenAI Codex | Claude Cowork |
|---|---|---|
| Entry | $20/mo (ChatGPT Plus) | $20/mo (Claude Pro) |
| Power tiers | ChatGPT Pro (higher limits) | Claude Max 5x: $100/mo; Max 20x: $200/mo |
| Team/enterprise | ChatGPT Business / Enterprise | Premium seat: ~$125/mo ($100/mo billed annually) |
| API automation | Yes (e.g., codex-mini per-token pricing) | Cowork is inside Claude Desktop; the API is separate |

How to think about cost (buyers + operators)

If you’re an individual developer, Codex bundled with ChatGPT Plus can be hard to beat on cost/value.

If you’re an individual operator/analyst, Cowork inside Claude Pro offers similar entry economics.

At team scale, the models diverge: engineering orgs may value Codex’s API options for programmatic automation, while Cowork is packaged per seat and oriented toward individual knowledge workflows.
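Per-seat pricing at team scale is simple arithmetic: seats × monthly price × 12. A minimal sketch using only the list prices quoted in the snapshot; the 10-seat team is hypothetical, and reading "($100 annual)" as $100 per seat-month on annual billing is an assumption.

```python
def annual_cost(seats: int, monthly_price: float, months: int = 12) -> float:
    """Per-seat subscription cost: seats x price per seat-month x months."""
    return seats * monthly_price * months


SEATS = 10  # hypothetical team size

# Prices from the snapshot table (Feb 2026); "$100 annual" read as $100/mo billed annually.
plans = {
    "Claude Pro / ChatGPT Plus ($20/mo)": 20,
    "Claude Max 5x ($100/mo)": 100,
    "Claude Max 20x ($200/mo)": 200,
    "Premium seat, monthly billing (~$125/mo)": 125,
    "Premium seat, annual billing ($100/mo)": 100,
}
for name, price in plans.items():
    print(f"{name}: ${annual_cost(SEATS, price):,.0f}/year for {SEATS} seats")
```

At 10 seats, annual billing on the premium seat comes to $12,000 versus $15,000 on monthly billing. API-based automation is metered per token instead, so it does not fit this per-seat formula.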

Execution environment & security: cloud isolation vs local isolation

This isn’t just “where it runs.” It affects what the agent can access, how approvals work, and what your governance team will care about.

Codex: cloud sandboxes

tasks run in isolated containers in the cloud

repository is cloned into the sandbox

internet may be disabled during tasks (reduces exfiltration risk, but limits dependency fetching)

permission modes control what the agent can do

Tradeoff: strong sandbox isolation, but the work executes off-device.

Cowork: local VM + explicit mounts

runs a Linux VM locally on your Mac

you mount specific local folders (permissioned access)

network traffic is controlled via allowlist-style proxying

Tradeoff: local execution with explicit access boundaries, but the product is desktop-centric and macOS-first.
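Allowlist-style proxying means every outbound request is checked against a list of permitted hosts and everything else is refused (default deny). A minimal sketch of that decision; the hosts and the `*.domain` wildcard syntax are hypothetical and not Cowork's documented rules.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: exact hosts, plus "*.domain" for any subdomain.
ALLOWLIST = {"pypi.org", "files.pythonhosted.org", "*.googleapis.com"}


def is_allowed(url: str, allowlist: set[str] = ALLOWLIST) -> bool:
    """Return True only if the URL's host matches an allowlist entry."""
    host = (urlsplit(url).hostname or "").lower()
    if not host:
        return False                    # malformed or hostless URLs are denied
    for entry in allowlist:
        if entry.startswith("*."):
            suffix = entry[1:]          # "*.googleapis.com" -> ".googleapis.com"
            if host.endswith(suffix):   # subdomains only, not the bare domain
                return True
        elif host == entry:
            return True
    return False


print(is_allowed("https://pypi.org/simple/requests/"))   # True
print(is_allowed("https://sheets.googleapis.com/v4/x"))  # True
print(is_allowed("https://pypi.org.evil.example.com/"))  # False
```

Matching on the parsed hostname, rather than searching the URL string, is what stops look-alike hosts such as `pypi.org.evil.example.com` from slipping through.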

Bottom line: If cloud execution is acceptable and you want developer-grade CI/test loops, Codex fits. If local execution and file-level permissioning are central to your use case, Cowork’s architecture is meaningful.

Integrations: dev stack vs productivity stack

Codex is strongest where engineering work lives

GitHub workflows (branches/PRs)

IDE ecosystem (VS Code, Cursor, Windsurf)

CI/CD and test harnesses

developer-oriented “skills” integrations (e.g., design/project/deploy touchpoints)

Cowork is strongest where individual knowledge work lives

local files

Office productivity applications

a broad connector ecosystem for cloud services (including MCP connectors) and plugin marketplaces

browser automation to operate inside web apps

A simple rule: if your system of record is code, choose Codex. If your system of record is local docs/data/tools, choose Cowork.

Extensibility: repo-scoped rules vs portable plugins

Codex customization is repo-centered

Codex is guided through project instructions and conventions (e.g., AGENTS.md). That’s exactly what developers want: keep behavior aligned with the repo.
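Repo-scoped guidance implies a lookup rule: which instruction files apply to the file being edited? A minimal sketch, assuming the commonly described AGENTS.md convention that a file governs its own directory tree and more deeply nested files win on conflicts. `applicable_agents_md` is a hypothetical helper, not part of any Codex API; check the current Codex docs for the exact precedence rules.

```python
from pathlib import Path


def applicable_agents_md(repo_root: str, working_file: str) -> list[Path]:
    """Return the AGENTS.md files whose scope covers `working_file`, root-first.

    Assumed convention: an AGENTS.md applies to the directory tree that contains
    it, and more deeply nested files (later in the list) win on conflicts.
    """
    root = Path(repo_root).resolve()
    start = Path(working_file).resolve().parent
    found = []
    # Walk from the filesystem top down to the file's directory, keeping only
    # directories at or below the repository root.
    for directory in reversed([start, *start.parents]):
        if directory != root and root not in directory.parents:
            continue
        candidate = directory / "AGENTS.md"
        if candidate.is_file():
            found.append(candidate)
    return found
```

For a file such as `services/api/users.py`, this returns the root AGENTS.md first and `services/api/AGENTS.md` last (if both exist), so the more specific rules are read last and take precedence.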

Cowork customization is workflow-centered

Cowork plugins bundle skills, connectors, commands, and sub-agents, designed to be shared and installed without heavy dev tooling. That matches the needs of ops and knowledge teams.

Multi-agent vs single-thread: throughput vs orchestration

Codex supports parallel agents. This matters when work is modular and you want multiple tasks moving at once.

Cowork executes one task at a time today. That is often fine for individual knowledge workflows, where stitching together a process on your local computer is the bottleneck, but it is a real limitation if you need concurrency.

If you’re managing a backlog, multi-agent parallelism can be a direct productivity multiplier.
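The throughput argument is easy to see with independent tasks: run sequentially, total time is the sum of the task times; run in parallel, it approaches the longest single task. A minimal sketch with Python's `concurrent.futures`, where `run_task` is a hypothetical stand-in for one agent working one backlog item (simulated with a sleep); it says nothing about how Codex actually schedules its agents.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_task(task: str, seconds: float = 0.2) -> str:
    """Hypothetical agent task: a sleep stands in for real work."""
    time.sleep(seconds)
    return f"PR ready: {task}"


BACKLOG = ["fix null check", "add pagination", "write tests for auth"]

start = time.perf_counter()
sequential = [run_task(t) for t in BACKLOG]       # single-task execution
seq_time = time.perf_counter() - start

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(BACKLOG)) as pool:
    parallel = list(pool.map(run_task, BACKLOG))  # all tasks at once; map keeps input order
par_time = time.perf_counter() - start

print(f"sequential: {seq_time:.1f}s, parallel: {par_time:.1f}s")
```

The gain only holds when tasks are independent; items that touch the same files still have to be serialized or merged afterwards.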

Browser automation: Cowork has it, Codex doesn’t

Codex: no browser automation; it’s designed for code sandboxes.

Cowork: uses Claude in Chrome for web automation (clicking, form filling, data extraction), with human intervention for CAPTCHAs/logins.

If your workflows live behind logins in web apps, browser automation can be the difference between “cool demo” and “actually useful.”

Who should choose what in 2026?

Choose OpenAI Codex if you want:

an autonomous coding agent that runs tests and iterates

parallel agents for engineering throughput

deep GitHub/IDE alignment

a developer-first workflow that outputs PR-ready changes

Choose Claude Cowork if you want:

an agent for docs/spreadsheets/files/reporting

local execution with explicit folder-level access

browser automation for real-world web tasks

novice-friendly extensibility (plugins)

Choose both if you’re a typical modern organization

Most companies have both engineering and knowledge workflows. In that reality, “standardizing on one agent” often creates forced fit and frustration. A more practical approach is:

Codex for engineering automation

Cowork for individual knowledge-work automation

clear internal guidelines so automation doesn’t become invisible and ungoverned

The bottom line

OpenAI Codex and Claude Cowork aren’t truly head-to-head competitors. They’re specialists in different domains of agentic work:

Codex automates software development loops.

Cowork automates individual knowledge work across files, tools, and the browser.

If you write code for a living, evaluate Codex first. If you spend your days in Microsoft 365 and documents, Cowork is the more direct fit. If you run an organization that needs both, the winning move is to choose intentionally: by workflow, not by hype.

FAQ

What’s the difference between OpenAI Codex and Claude Cowork?

Codex is a cloud-based coding agent for developers (code, tests, PRs). Cowork is a desktop-based knowledge-work agent (files, docs, sheets, cross-tool automation) running in a local Linux VM.

Are Codex and Cowork competitors?

Not in the usual sense. They overlap in “agentic AI,” but target different users and workflows. Many companies will adopt both.

Which one is “more secure”?

It depends on your risk model. Codex uses cloud sandbox isolation (with constrained internet). Cowork uses local VM isolation with explicit folder mounts and an allowlist proxy. Evaluate data residency needs, access controls, and what each agent can touch.

Which is better for enterprise adoption?

Capability-wise, Codex fits engineering orgs that want autonomous test-driven iteration and PR workflows. Cowork fits knowledge teams that need local file access, connectors, document workflows, and browser automation. For enterprise rollout, packaging/pricing and platform constraints also matter.

Do these agents replace humans?

They’re best used as force multipliers. Humans still define goals, provide context, review outputs, and own decisions—especially for anything high-stakes.
